Paul Boddie's Free Software-related blog

Paul's activities and perspectives around Free Software

Resource Autocompletion in Roundcube

June 21st, 2013

In a previous article, I described my experiences setting up Kolab for groupware functionality on Debian Wheezy. One of the problems I encountered was that of searching for resources when creating events, and it didn’t seem possible to start typing the name of a resource and to have the details autocompleted. Given that Kolab integrates Roundcube webmail with other services including LDAP directories, and given that Roundcube seemed happy to look up people in such directories, I suspected that fixing this problem would probably involve refining the search criteria for each search performed when a key is pressed in the participant field of the event dialogue (or in the recipient field of the compose mail screen).

After some digging in the source code for the purpose of getting some familiarity with what goes on inside Roundcube, I found a guide to LDAP address books in Roundcube that mentions some of the queries one might expect to happen when autocompletion is taking place. And, sure enough, such information can be found in the /etc/roundcubemail/main.inc.php file provided by the Kolab-related Debian packages. So it then becomes a matter of specifying some other queries to permit resources to be found as well as people.

My solution to the problem, which may not be the most appropriate (so I welcome corrections and comments), is to add another address book provider as follows:

    $rcmail_config['ldap_public'] = array(
        ...
        'kolab_resources' => array(
            'name' => 'Global Resources',
            ...
            'base_dn' => 'ou=Resources,dc=example,dc=com',
            ...
            'LDAP_Object_Classes' => array("top", "mailrecipient"),
            'required_fields' => array("cn", "mail"),
            ...
            'search_fields' => array('cn', 'mail'),
            'sort' => array('cn', 'mail'),
            ...
            'filter' => '(objectClass=mailrecipient)',
            ...
            'fieldmap' => Array(
                // Roundcube => LDAP
                'name' => 'cn',
                'email:primary' => 'mail',
                'email:alias' => 'alias',
            ),
        ),
        ...
    );

    $rcmail_config['autocomplete_addressbooks'] = Array(
        'kolab_addressbook',
        'kolab_resources',
    );

Here, the new entry for kolab_resources augments the existing kolab_addressbook entry (not shown), and it changes the nature of the search by modifying the base_dn to refer to the “Resources” organisational unit, where Kolab puts all the resources in the LDAP store. Since resources do not seem to provide various fields that people provide, some changes are also required to indicate which fields are provided, and in the fieldmap section the name expected by Roundcube is mapped to the cn provided by the LDAP store, thus enabling the name of each resource to appear alongside the mail address by which the resource is known.
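To make the effect of these settings a little more concrete, here is a rough Python sketch of the kind of LDAP search that autocompletion might end up issuing against the resources address book. It is only an illustration: the helper names are my own invention, the prefix-style matching is an assumption (Roundcube builds its own filters and may match differently), and only the base_dn, filter and search_fields values are taken from the configuration above.

```python
# Values taken from the kolab_resources entry in the configuration.
BASE_DN = "ou=Resources,dc=example,dc=com"
BASE_FILTER = "(objectClass=mailrecipient)"
SEARCH_FIELDS = ["cn", "mail"]

def escape_filter_value(value):
    """Escape the characters that are special in LDAP filters (RFC 4515)."""
    replacements = {
        "\\": r"\5c",
        "*": r"\2a",
        "(": r"\28",
        ")": r"\29",
        "\0": r"\00",
    }
    return "".join(replacements.get(ch, ch) for ch in value)

def autocomplete_filter(typed):
    """Hypothetical helper: base filter AND (prefix match on any search field)."""
    term = escape_filter_value(typed)
    alternatives = "".join("(%s=%s*)" % (field, term) for field in SEARCH_FIELDS)
    return "(&%s(|%s))" % (BASE_FILTER, alternatives)

# What a search for the start of a resource name might look like.
print("base:  ", BASE_DN)
print("filter:", autocomplete_filter("For"))
```

A filter like the one printed here can also be tried by hand against the directory with a tool such as ldapsearch, which is a quick way of checking that the resources really do live under the chosen base_dn and carry the expected object class.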

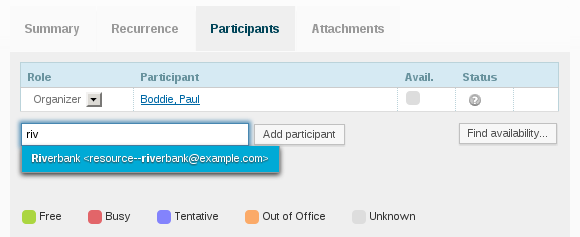

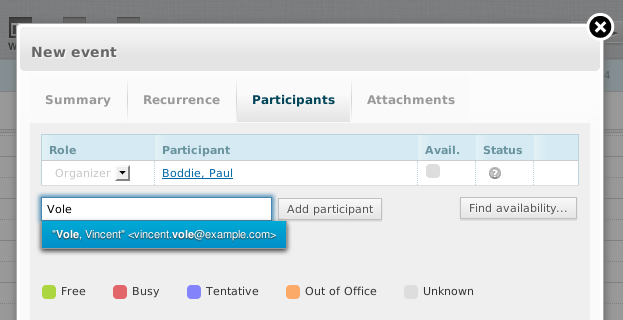

With the new entry added, the autocomplete_addressbooks setting needs to be updated to include this new source of data in any future searching operations. And with that, it should be possible to specify a resource and have it autocompleted in Roundcube:

Evaluating Free Software Groupware: Kolab

June 13th, 2013

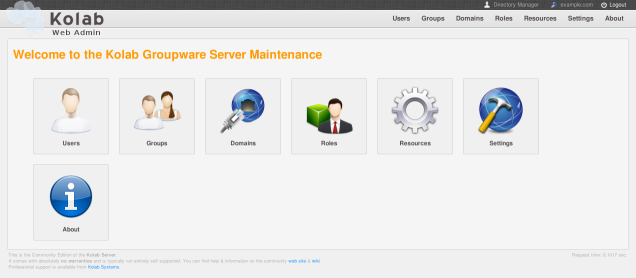

I have recently had the inclination to evaluate Free Software groupware solutions in more detail, and perhaps the first that came to mind was Kolab: a long-running project that provides a range of groupware functions including e-mail, calendaring, address books, task management, and various other functions for a fairly wide range of organisation sizes. Of course, there are plenty of Free Software groupware projects offering complete and integrated solutions as well as individual components for use with existing infrastructure; the Debian Wiki page on groupware provides a fair (but probably incomplete) overview of the more interesting projects.

Installing and Configuring Kolab

Intrigued by accounts that Kolab is fairly easy to install on Debian Wheezy – the latest stable release of the Debian GNU/Linux software distribution – I set out to investigate, making use of my own tools to set up a User Mode Linux environment in which I could install the software. Initially, I tried to re-use an existing virtual environment, but a quick attempt to configure the software using the setup-kolab program was not successful, and a brief excursion via the #kolab IRC channel (on freenode) indicated that I might be better off starting with a completely fresh installation of Wheezy. Although I imagine it is possible to deal with the problems I encountered – setup-kolab did not like the presence of an existing LDAP server – the easiest way to troubleshoot is to start with a known configuration and see if things can be made to work from there.

Installation of Kolab 3.0 on Debian is fairly straightforward, as described both in the manual and more concisely in the blog article mentioned above (and also in older reports). The Kolab packages in Debian are set up to prefer the postfix packages to the apparent default of the exim4 packages and thus want to replace the latter. This might be a problem in some environments, and it may be possible to retain Exim for use with Kolab, but I haven’t investigated this. A somewhat undesirable feature of the currently available packages is that they are unsigned: Debian makes extensive use of package signatures to prevent tampering, and although it can be an annoyance to sign and publish packages and to publish the necessary keys for verification, hopefully Kolab will make its way into Debian as a collection of official packages once again.

Some Current Pitfalls

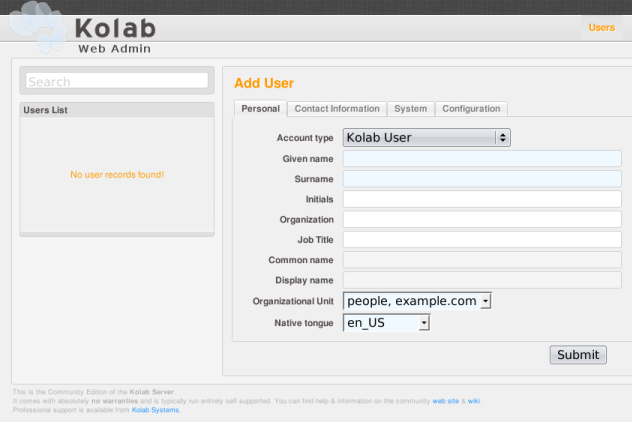

With a fresh system, setup-kolab seems fairly happy, and with the initial configuration performed it is possible to log into the administration interface, although it seems to be necessary to explicitly start the Apache server first. One strange problem with the Debian packages seems to be in the absence of a library file in the correct location, and this manifests itself in the administration interface as the absence of any way to add users. I fixed this for my system as follows:

ln -s /usr/lib/i386-linux-gnu/nss/libsoftokn3.so /usr/lib/libsoftokn3.so

(Unlike the message linked above describing this fix, I still use a machine with the i386 architecture, not the x86_64 architecture, and the underlying problem seems to be related to the way that libraries are now stored to permit support for multiple architectures on the same computer.)

I also noticed that some Kolab component, at least after some administrative tasks have been performed, tries to communicate with the IMAP server unsuccessfully but persistently. To reset their relationship, the following seemed to be required:

service cyrus-imapd restart

Some other complaints emerged on the console about mailbox creation, perhaps due to some resources I created, but it is possible to verify the state of the mailboxes as follows:

kolab list-mailboxes

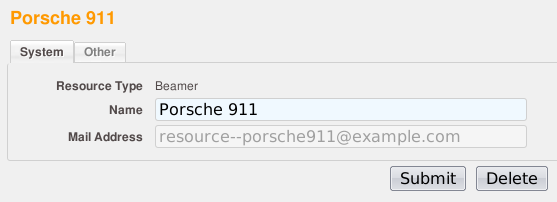

I noticed that no matter which resource type I specified, the type of created resources would always be “Beamer”.

This probably doesn’t matter so much for actual resource booking, but I imagine that there’s a problem here needing to be fixed. It is possible that the Debian packages suffer from the above problems but that these problems have since been fixed in the project’s repository and in subsequent non-Debian package or distribution releases; I haven’t verified this, however.

Fun With Administration

Administration is never really much fun, but the administrative interface seems to provide a reasonable way of adding users and resources, populating the different information stores with user and mailbox details.

With the packaging issues mentioned above all sorted out, users can be added in the users section:

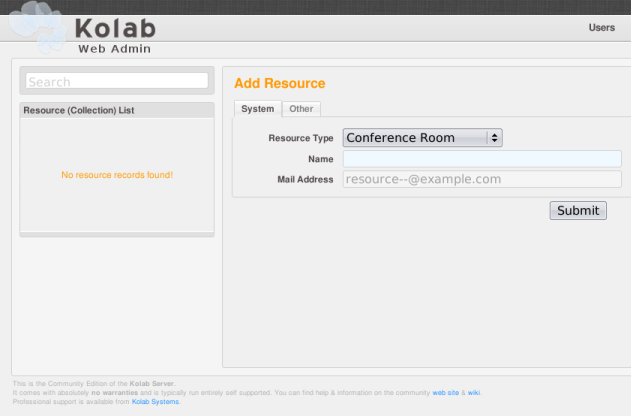

And resources can be added in the resources section:

Given that Kolab is based on conventional services like LDAP directories, IMAP mailboxes, and so on, if you needed to integrate with existing infrastructure and accommodate existing user populations, you probably wouldn’t spend much time in the administrative interface, but it is nice to see that an interface exists for quick edits to the system.

What About the Users?

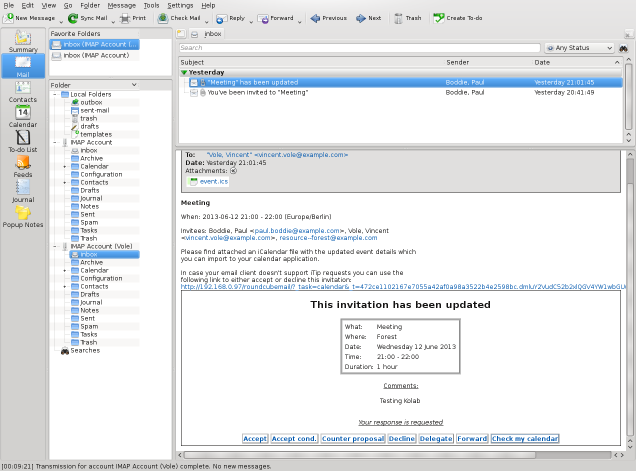

With some users set up, one might be interested in seeing things from their perspective. Out of the box, the Debian packages provide a Roundcube webmail interface:

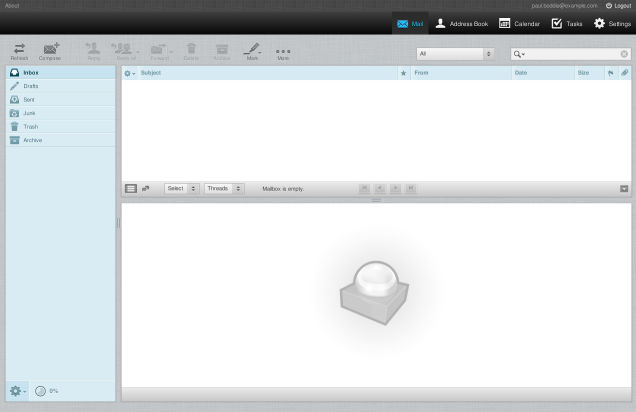

On the inside, the interface is much like the Roundcube many people have come to know. For instance, the mail interface is more or less what you would expect. Here, the folders on the left are IMAP folders that are also available to IMAP clients, but to start with there obviously aren’t any mails to look at:

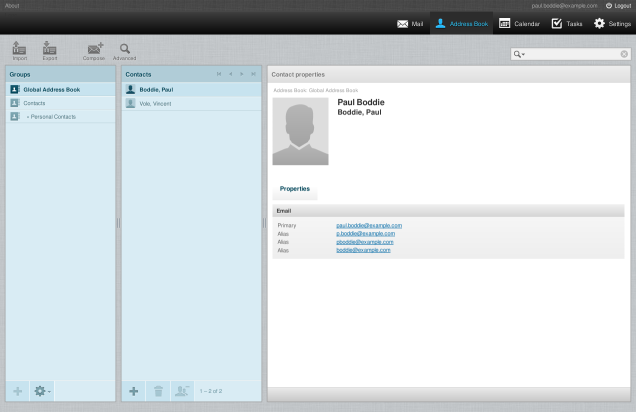

Amongst the usual view buttons at the top of the window, featuring the mail, address book and settings, we find additional buttons for the calendar and tasks. First, the address book:

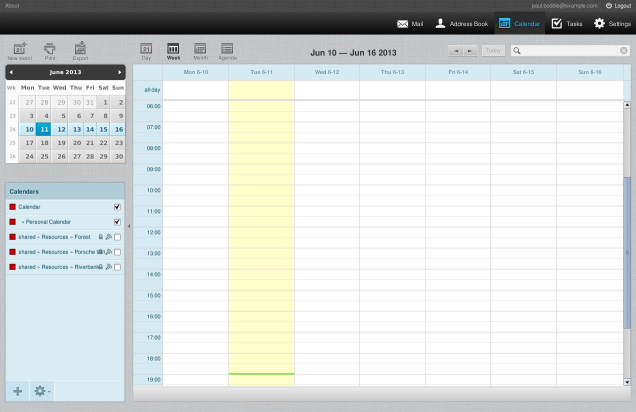

Here, it seems to pick up other users added via the administrative interface. Meanwhile, the calendar interface is probably slightly more interesting to look at because it’s something that you don’t usually get in Roundcube:

The calendar widgets seem to be rather familiar and those who do more JavaScript programming than I do will probably be able to identify the project that pioneered them. Nevertheless, they seem to behave mostly as I would expect from having used them elsewhere on other sites and services. One strange thing is the date numbering above the days in the week view (“Mon 6-10” meaning “Monday 10th June”, for example) which I imagine could be customised somewhere, although I didn’t see a setting to do exactly that.

Fun With Events

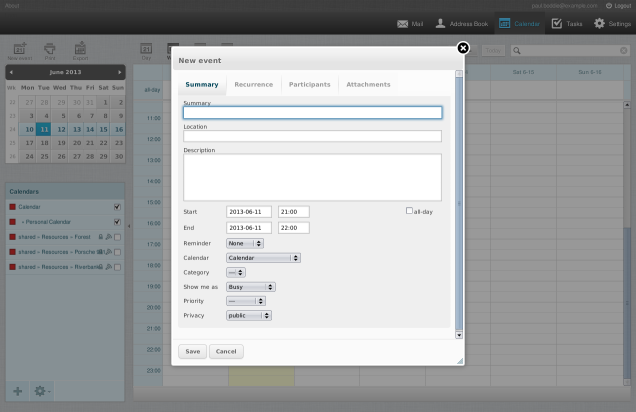

Given the existence of the calendar in Roundcube, and given that calendaring interests me already, I decided to make an attempt at creating a new event, inviting a participant, and requesting a resource. Dragging an area in the calendar caused the event dialogue to appear:

The location field appears to be non-autocompleted free text, but it would be nice to have a menu of recognised locations or resources, and perhaps there is some kind of setting or extension to provide that. With the main details filled out, on I went to the participants tab:

Just like the mail interface in Roundcube, the calendar also supports address lookups and offers autocompletion of names. However, I found that autocompletion didn’t take place for resources, so I ended up having to invite resources by using their full e-mail addresses (which were defined previously in the administrative interface). For example, for the “Forest” resource, I had to specify resource--forest@example.com as a participant. Maybe this is also something that should be done another way, but I didn’t manage to figure it out.

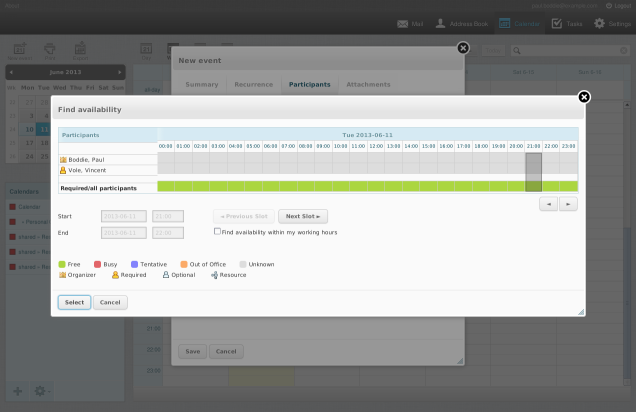

Finding the availability of participants seems possible. Kolab does support the retention of free/busy information, so for those people making this information available to Kolab, their status should be visible in the user interface:

In principle, it should be possible for people to exchange free/busy information via e-mail and for the recipient to record this information and use it to schedule events, but I haven’t looked into whether Kolab or Roundcube support this at their respective levels. I found that in the availability view, it is possible to change the role of each participant by clicking on the icon next to their name, and this made it possible to give a resource the appropriate role. Again, if there were a better way of choosing a resource that I missed, maybe this wouldn’t be necessary.

With an event created and participants invited, Kolab manages to notify those participants, and to make things interesting I decided to configure Kontact in a KDE 4 environment (running in Debian Squeeze) to connect on behalf of the invited participant. Here is what that participant sees when they check their mail:

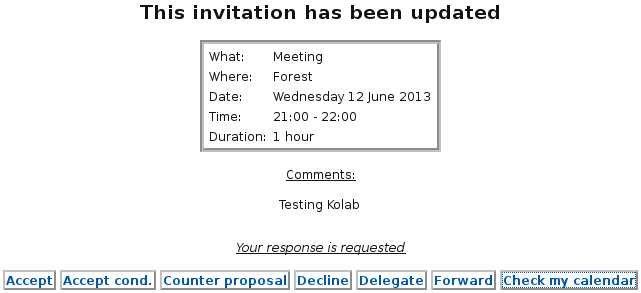

Although it is rather small in the above screenshot, Kontact shows a collection of links that allow the recipient to act on an incoming event notification. Here is a close-up:

For Kontact to be able to do this, it appears that the kdepim-groupware package is required, and indeed this functionality supports the iTIP technology mentioned above (here, in an invitation context instead of the free/busy context discussed above). It is important to understand that the open standards underpinning this workflow do not require that everyone have a login to a common server and manipulate information on that server directly: a critical feature of the iCalendar-related standards is that people are able to schedule events collaboratively without all being part of the same monolithic organisation and/or infrastructure. It is also interesting to see that where a recipient’s e-mail program cannot handle the workflow defined by iTIP, the message includes a link to the Roundcube webmail that can be used to signal a participant’s attendance or absence.

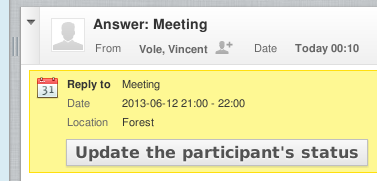

When a participant responds using one of the links provided in the message, the organiser gets a notification. Here, the Roundcube user gets to see a mail message telling them that the participant accepted the invitation:

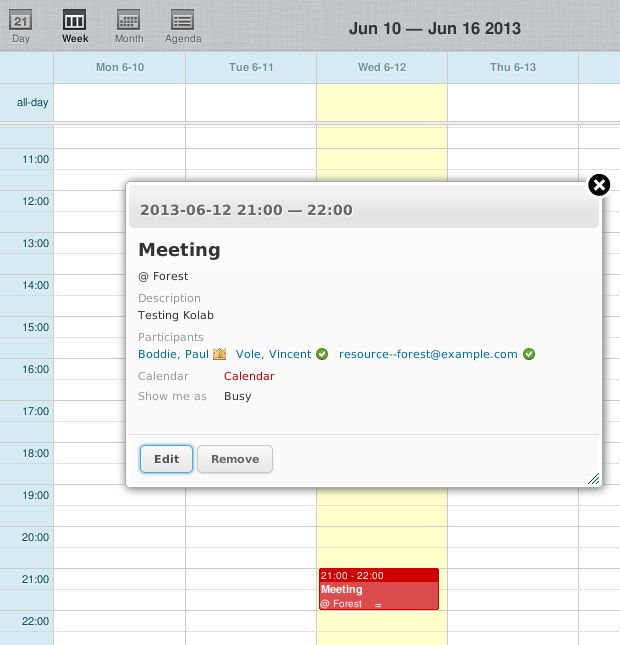

Upon pressing the update button provided, the status of the event is updated in the calendar:

Here, the organiser is shown with a crown next to his name, the participant (using Kontact) has accepted the invitation to the event, and the resource has apparently been secured.

In Conclusion

There are obviously plenty of other experiments that could be performed here, as well as other features that could be explored. For instance, some more evaluation of the free/busy information, how local and remote users interact with it, and how well those with non-iTIP mail clients fare with over-the-Web notification of attendance or absence might be in order. Publishing calendars for over-the-Web consumption is also apparently supported, and it might be interesting to see how well Kolab supports the general “invite people you hardly know” event-planning paradigm that the likes of Doodle have been attempting to popularise.

It seems that Kolab at the very least supports basic calendar functionality in association with standards-compatible clients, and perhaps a brief investigation with Thunderbird (plus Lightning) and even more elementary mail and calendar clients might be informative. Since Kolab is Free Software, of course, the chances of resolving any shortcomings are increased for those willing and able to peruse and modify the code, and maybe I will take a closer look at that, too.

As noted above, calendaring and scheduling systems are already an interest of mine. The only problem now is that there’s just so much to look at and yet so little time to do so!

Site Licences and Volume Licensing: Locking You Both In… and Out

June 9th, 2013

Once upon a time, back in the microcomputer era, if you were a reputable institution and were looking to acquire software it was likely that you would end up buying proprietary software, mostly because Free Software was not a particularly widely-known concept, and partly because any “public domain” or “freeware” programs probably didn’t give you or your superiors much confidence about the quality or maintenance of those programs, although there were notable exceptions on some platforms (some of which are now Free Software). As computers became more numerous, programs would be used on more and more computers, and producers would take exception to their customers buying a single “copy” and then using it on many computers simultaneously.

In order to avoid arguments about common expectations of reasonable use – if you could copy a program onto many floppy disks and run that program on many computers at once, there was obviously no physical restriction on the use of copies and thus no apparent need to buy “official” copies when your computer could make them for you – and in order to avoid needing to engage in protracted explanations of copyright law to people for whom such law might seem counter-intuitive or nonsensical, the concept of the “site licence” was born: instead of having to pay for one hundred official copies of a product, presumably consisting of one hundred disks in one hundred boxes with one hundred manuals, at one hundred times the list price of the product, an institution would buy a site licence for up to one hundred computers (or perhaps as many as the institution has, betting on the improbability that the institution will grow tenfold, say) and pay somewhat less than one hundred times the original price, although perhaps still a multiple of ten of that price.

Thus, the customer got the vendor off their back, the vendor still got more or less what they thought was a fair price, and everyone was happy. At least that is how it all seemed.

The Physical Discount Store

Now, because of the apparent compromise made by the vendor – that the customer might be paying somewhat less per copy – the notion of the “volume licence” or “bulk discount” arose: suddenly, software licences start to superficially resemble commodities and people start to think of them just like they do when they buy other things in bulk. Indeed, in the retail sector the average person became aware of the concept of bulk purchasing with the introduction of cash and carry stores, discount stores, and so on: the larger the volume of goods passing through those channels, the bigger the discounts on those goods.

Now, economies of scale exist throughout modern commerce and often for good reason: any fixed costs (or costs largely insensitive to the scale of output) in production and distribution can be diluted by an increased number of units produced and shipped, making the total per-unit cost less; commitments to larger purchases, potentially over a longer period of time, can also provide stability to producers and suppliers and encourage mutually-beneficial and lasting relationships throughout the supply chain. A thorough treatment of this topic is clearly beyond a blog post, but it is worthwhile to briefly explore how savings arise and how discounts are made.
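The dilution of fixed costs is easy enough to show with a little arithmetic. The following Python sketch uses entirely made-up figures (a fixed cost of 500,000 and a variable cost of 2 per unit, in whatever currency you like) and is only meant to illustrate the shape of the relationship, not any real producer's numbers.

```python
def per_unit_cost(fixed_costs, variable_cost_per_unit, units):
    """Total cost per unit: the fixed costs are spread across all units made."""
    return fixed_costs / units + variable_cost_per_unit

# Hypothetical figures: the more units produced, the less each one has to
# carry of the fixed costs.
for units in (10_000, 100_000, 1_000_000):
    print(units, per_unit_cost(500_000, 2.0, units))
```

With these invented numbers, the per-unit cost falls from 52 at ten thousand units to 2.5 at a million units, which is where the room for a bulk discount comes from.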

Let us consider a producer whose factory can produce at most a million units of a product every year. It may not seek to utilise this capacity if it cannot be sure that all units will be sold: excess inventory may incur warehouse costs and also result in an uncompetitive product going unsold or needing to be heavily discounted in order to empty those warehouses and make room for more competitive stock. Moreover, the producer may need to reconsider their employment levels if the demand varies significantly, which in some places incurs significant costs both in reduction and expansion. Adding manufacturing capability might not be as easy as finding a spare factory, either. All this additional flexibility is expensive for producers.

However, if a large, well-known retailer like Wal-Mart or Tesco (to name but two that come to mind immediately) comes along and commits to buying most or all of the production, a producer now has more certainty that the inventory will be sold and that it will not be paying people to do nothing or to suddenly have to change production lines to make new products, and so on. Even things like product variations can be minimised by having a single customer or few customers, and this reduces costs for the producer still further. Naturally, Wal-Mart would expect some of the savings to be passed on to them, and so this relationship benefits both parties. (It also produces a potential discount to be passed on to retail customers who may not be buying in bulk after all, but that is another matter.)

The Software Discount Store?

For software, even though the costs of replication have been driven close to nothing, the production of software certainly has a significant fixed cost: the work required to develop a viable product in the first place. Let us say that an organisation wishes to make and sell a non-niche product but needs to employ fifty people for two years to do so (although this would have been almost biblical levels of manpower for some successful software companies in the era of the microcomputer); thus one hundred person-years are invested in development. To just remain in business while selling “copies” of the software, one might need to sell one hundred thousand individual copies. That is if the company wants to just sell “licences” and not do things like services, consulting, paid support, and so on.
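To see how a figure like one hundred thousand copies might come about, here is a small Python sketch of the break-even calculation. The cost per person-year (50,000) and the revenue kept per copy sold (50) are purely my own assumptions, chosen only to make the arithmetic visible; the fifty people and two years are the figures from the example.

```python
import math

def break_even_copies(people, years, cost_per_person_year, revenue_per_copy):
    """Copies that must be sold just to recover the development cost."""
    development_cost = people * years * cost_per_person_year
    return math.ceil(development_cost / revenue_per_copy)

# Fifty people for two years, with assumed (hypothetical) cost and revenue.
print(break_even_copies(50, 2, 50_000, 50))

# Doubling the assumed revenue per copy halves the number of sales needed.
print(break_even_copies(50, 2, 50_000, 100))
```

Under those assumptions the answer happens to be one hundred thousand copies, and it is easy to see how sensitive that figure is to the price: nothing about it depends on any cost of making each individual copy.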

Now, the cost of each copy can be adjusted according to the number of sales. If things go better than expected, the prices could be lowered because the company will cover its costs more quickly than anticipated, but they may also raise the prices to take advantage of the desirability of the product. If things go worse than expected, the prices might be raised to bring in more revenue per sale, but such pricing decisions also have to consider the customer reaction where an increased price turns away customers who can no longer justify the expense. In some cases, however, raising the price might make the product seem more valuable and make it more attractive to potential customers, despite the initial lack of interest from such customers.

So, can one talk about economies of scale with regard to software as if it were a physical product or commodity? Not really. The days of needing to get more disks from the duplicator, more manuals from the printer, and to send more boxes to distributors are over, leaving the bulk of the expense in employing people to get the software written. And all those people developing the product are not producing more units by writing more code or spending more time in the office. One can argue that by adding more features they are generating more sales, but it is doubtful that the relationship between features and sales is so well defined: after a while, a lot of the new features will be superfluous for all but “power users”. One can also argue that by adding more features they are making the product seem more valuable, and so a higher price can be justified. To an extent this may be the case, but the relationship between price and sales is not always so well defined, either (despite attempts to define it). But certainly, you do not need to increase your “production capacity” to fulfil a sales need: whether you make one hundred or one million sales (or generate a tenth of or ten times the anticipated revenue) is probably largely independent of how many people were hired to write the code.

But does it make sense to consider bulk purchasing of software as a way of achieving savings? Not really. Unlike physical production, there is no real limit to how many units are sold to customers, and so beyond a certain threshold demanded by profitability, there is no need for anyone to commit to purchasing a certain number of units. Especially now that a physical component of a software product is unlikely to be provided in any transaction – the software is downloaded, the manual is downloaded, there is no “retail box”, no truck arriving at the customer, no fork-lift offloading pallets of the product – there is also no inventory sitting in a warehouse going unsold. It might be nice if someone paid a large sum of money so that the developers could keep working on the product and not have to be moved to some other project, but the constraints of physical products do not apply so readily here.

Who Benefits from Volume Licensing?

It might be said, then, that the “economies of scale” argument starts to break down when software is considered. Producers can more or less increase supply at will and at a relatively low cost, and they need only consider demand in order to break even. Beyond that point, everything is more or less profit and they deliver units at no risk to themselves. Certainly, a producer could use this to price their products aggressively and to pass on considerable savings to customers, but they have no obligation and arguably little inclination to do so for profitability reasons alone. Indeed, they probably want to finance new products and therefore need the money.

When purchasers of physical goods choose to buy in bulk, they do so to get access to savings passed on by the producer, and for some categories of products the practice of committing larger sums of money to larger purchases carries little risk. For example, an organisation might buy a larger quantity of toilet paper than it normally would – even to the point of some administrator complaining that “this must be more than we really need!” – and as long as the organisation had space to store it, it would surely be used over time with very little money wasted as a result.

But for software, any savings passed on by the producer are more discretionary than genuine products of commerce, and there is a real risk of buying “more than we really need”: a licence for an office application will not get “used up” when someone has “reached the end” of another licence; overspending on such capacity is just throwing money away. It is simply not in the purchaser’s interest to buy too many licences.

Now, software producers have realised that their customers are sensitive to this issue. Presumably, the notion of the site licence or “volume licensing” arose fairly quickly: some customers may have indicated that their needs were not so well-defined that they could say that they needed precisely one hundred copies of a product, and besides, their computer users might not have all been using the software at the same time, and so it might not make sense to provide everyone with a copy of a program when they could pass the disks around (or in later times use “floating licences”). So, producers want customers to feel that they are getting value for money and not spending too much, and thus the site licence was presumably offered as a way of stopping them from just buying exactly what they need, instead getting them to spend a bit more than they might like, but perhaps a bit less than they would need to if money were no object and per-unit pricing were the only thing on offer. (The other way of influencing the customer is, of course, the threat of audits by aggressive proprietary software organisations, but that is another matter.)

Regardless of the theory and the mechanisms involved, do customers benefit from site licences? Well, if they spend less on a site licence than they do on the list price of a product multiplied by the number of active users of that product, then they at least benefit from savings on the licensing fees, certainly. However, there are other factors involved, introducing other broader costs, that we will return to in a moment.
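The comparison described here boils down to a single inequality, sketched below in Python. The list price of 100 and the site licence fee of 3,000 are hypothetical figures of my own, and whole-number amounts are assumed for simplicity.

```python
def licence_saving(site_licence_fee, list_price, active_users):
    """Positive when the site licence costs less than one copy per active user."""
    return list_price * active_users - site_licence_fee

def break_even_users(site_licence_fee, list_price):
    """Smallest number of active users for which the site licence is strictly cheaper."""
    return site_licence_fee // list_price + 1

# Hypothetical figures: list price 100, site licence fee 3,000.
print(licence_saving(3_000, 100, 100))  # everyone actually uses the product
print(licence_saving(3_000, 100, 20))   # only a few people actually use it
print(break_even_users(3_000, 100))
```

The interesting input is the number of active users rather than the number of computers the licence happens to cover: with only twenty people genuinely using the product in this invented case, the "saving" is negative.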

Do producers benefit from site licences? Almost certainly. They allow companies to opportunistically increase revenue by inviting customers to spend a bit more for “peace of mind” and convenience of administration (no more having to track all by yourself who is using which product and whether too many people are doing so because a “helpful” company will take care of it for you). If such a thing did not exist, customers would probably choose to act conservatively and more closely review their purchases. (Or they might just choose to embrace Free Software instead, of course.)

All You Won’t Eat

But it is the matter of what the customer needs that should interest us here. If customers did need to review their purchases more closely, they might find it hard to justify spending large sums on volume licences. After all, not everyone might be in need of some product that can theoretically be rolled out to everyone. Indeed, some people might prefer another product instead: it might be much more appropriate for their kind of work, or it might work better on their platform (or even actually work on their platform where the already-bought product does not).

And where the organisation’s purse strings are loosened when buying a site licence for a product in the first instance, the organisation may not be so forthcoming with finance to acquire other products in the same domain, even if there are genuine reasons for doing so. “You already have an office program you can use; why do you want us to buy another?” Suddenly, instead of creating opportunities, volume licensing eliminates them: if the realm of physical products worked like this, Tesco would offer only one brand of toilet paper and perhaps not even a particularly pleasant one at that!

But it doesn’t stop there. Some vendors bundle products together in volume licensing deals. “Why not indulge yourself with a package of products featuring the ones you want together with some you might like?” This is what customers are made to ask themselves. Suddenly, the justification for acquiring a superior product from a competitor of the volume licensing provider is subject to scrutiny. “You already have access to an intranet solution; why do you want us to spend time and money on another?” And so the supposedly generous site licence becomes a mechanism to rein in spending and even the mere usage of alternatives (which may be Free Software acquired at no cost), all because the acquisition cost of things that people are not already actively using is wrongly perceived as being “free”. “Just take advantage of the site licence!” is what people are told, and even if the alternatives are zero cost, the pressure will still be brought to bear because “we paid for things we could use, so let’s use them!”

And the Winner is…

With such blinkered thinking the customer can no longer sensibly exercise choice: it becomes too easy to constrain an organisation’s strategy based on what else is in the lucky dip of products included in the multiple product volume licensing programme. Once one has bought into such a scheme, there is a disincentive to look elsewhere for other solutions, and soon every need to be satisfied becomes phrased in terms of the solutions an organisation has already “bought”. Need an e-mail system? The solution now has to be phrased in terms of a single vendor’s product that “we already have”. And when such extra purchases merely add to proprietary infrastructure with proprietary dependencies, that supposedly generous site licence is nothing but bait on the end of the vendor’s fishing line.

We know who the real winner is here. The real loser is anyone having to compete with such schemes, especially anyone using open standards in their products, particularly anyone delivering Free Software using open standards. Because once people have paid good money for something, they will defend that “investment” even when it makes no real sense: this is basic human psychology at work. But the customer is the loser, too: what once seemed like a good deal will just result in them throwing good money after bad, telling themselves that it’s the volume of usage – the chance to sample everything at the “all you can eat” buffet – that makes it a “good investment”, never mind that some of the food at the buffet is unhealthy, poor quality, or may even make people ill.

The customer becomes increasingly “locked in”, unable to consider alternatives. The competition becomes “locked out”, unable to persuade the customer to migrate to open-standards-based solutions or indeed anything else, because even if the customer recognised their dependency on their existing vendor, the cost of undoing the mess might well be less predictable and less palatable than a subscription fee to that “preferred” vendor, appearing as an uncomfortably noticeable entry in the accounts that might indicate strategic ineptitude or wrongdoing – that a mistake has been made – which would be difficult to acknowledge and tempting to conceal. But when the outcome of taking such uncomfortable remedial measures would be lower costs, truly interoperable systems and vastly increased choice, it would be the right thing to do.

One might be tempted to just sit back and watch all this unfold, especially if one has no connection with any of the organisations involved and if the competition consists only of a bunch of proprietary software vendors. But remember this: when the customer is spending your tax money, you are the loser, too. And then you have to wonder who apart from the “preferred” vendor benefits from making you part of the losing team.

Horseplay in Public Procurement? “Standards!”

June 6th, 2013

There is a classic XKCD comic strip where the programmer, “slacking off” in the office and taking a break from doing work, clearly engaging in horseplay, issues the retort “Compiling!” to get his supervisor or peers off his back. It is seen as the ultimate excuse for not doing one’s work, immediately curtailing any further investigation of what really is going on in the corridor. Having recently been investigating some strategic public sector purchasing decisions, it occurred to me that something similar is going on in that area as well.

There’s an interesting case that came up a few years ago: Oslo municipality sought to acquire infrastructure for e-mail and related functionality. The scope of the tender covered “at least 30000 accounts” for client and server software, services and assistance, which is a pretty big tender but not unexpected given that the municipality is one of the largest single employers in Norway with almost 50000 employees (more statistics available here). Unfortunately, the additional documents are no longer available (and are generally not publicly available at the state procurement portal – you have to register as an interested party), but they are quoted in various places. Translating one particular requirement…

“Oslo municipality has standardised on Microsoft Office as office productivity software. It is therefore expected that solutions use MS Outlook 2003 and later as client.”

Two places where the offending requirements are reproduced are in complaints to the state procurement panel: 2009/124 and 2009/153. In these very similar complaints, it is pointed out that alternatives to Outlook can be offered as options (this is in the original tender), but that the municipality would only test proposed solutions with Outlook. As justification for insisting on Outlook compatibility, the municipality claimed that they had found “six different large companies providing relevant software in connection with the drafting of the requirements… all of which can be used together with Outlook”, and thus there was a basis for real competition. As a result, both complaints were rejected.

The Illusion of Compatibility

Now, one might claim that it is perfectly reasonable to want to acquire systems that work with the ones you already have. It is a bit like saying, “I’ve bought all this riding equipment: of course I want a horse!” The deeper issue here is whether anyone should be allowed to specify product compatibility to limit competition. In other words, when you just need transport to get around, why have you made your requirements so specific that you will only ever be getting a horse?

It is all very well demanding compatibility with a specific product, but when the means by which compatibility can be achieved are controlled by the vendor of that product, it is never going to be a fair competition for anyone trying to provide compatibility for their own separate products and solutions, especially when the vendor of the specified product is known to have used compatibility breakage to deliberately undermine the viability of competitors’ products. One response to this pitfall is to insist that those writing procurement tenders specify standards instead of products and that these standards must be genuinely open and not de-facto proprietary standards.

Unfortunately, the regulators of procurement do not seem to go even this far. The Norwegian government states that public sector institutions must support various standards, although the directorate concerned appears to have changed these obligations from the original directive and now insists that the dubious, forcibly- and incompletely-standardised Office Open XML document format must be accepted by the public sector in communications; they have also weakened the Internet publishing requirements for public sector institutions by permitting the use of various encumbered, cartel-controlled audio and video formats. For these changes, entertained in a review process, we can thank the likes of Statistics Norway who wanted “Word format” as well as OOXML to be permitted in the list of acceptable “standards”.

In any case, such directives only cover the surface of public sector activity, and the list of standards does not in general cover anything more than storage and interchange formats plus basic communications standards. This leaves quite a gap where established Internet standards exist but are not mandated, thus allowing proprietary protocols and technologies to insert themselves into infrastructure and pervert the processes of procurement and systems integration.

The Pretense of “Standards!”

But even if open standards were mandated in the public sector – a worthy and necessary measure – that wouldn’t mean that our work to ensure a level playing field – fairness in procurement – would be done. Because vendors can always advertise compliance with standards, they can still insist that their products be considered in any procurement contest, and even if those products do notionally support standards it does not mean that they will end up using them when deployed. For example, from the case of the Oslo municipality e-mail system, the councillor with responsibility for finance and development indicated the following:

“Oslo municipality is a complicated and comprehensive organisation and must take existing integration with specialist/bespoke systems into account. A procurement of other [non-Microsoft] end-user software will therefore result in unnecessary increases in costs for the municipality.”

In other words, even if existing software was acquired under the pretense that it supported standards, in deployment it may actually only function with other software using proprietary mechanisms, and the result of this is that newly-acquired software must also support these proprietary mechanisms. And so, a proprietary infrastructure grows, actively repelling components that employ open standards, with its custodians insisting that it is the fault of standards-compliant software that such an infrastructure would need to be dismantled almost in its entirety and replaced if even one standards-compliant component were to be admitted.

Who benefits the most from this? The vendor peddling the proprietary platforms and technologies that enable this morass of interdependency, of course. Make no mistake: any initial convenience promised by such a vendor fades away when the task of having to pursue an infrastructure strategy not dictated by outside interests is brought to bear on the purchaser. But such tasks are work, of course, and if there’s a way of avoiding them and insisting they don’t need attending to, a distraction can always be found.

And so, the horseplay continues under the excuse of “Standards!” when there is no real intent to uphold them or engage in the real work of maintaining a sustainable infrastructure that does not exclude open competition or channel public money to preferred vendors. Unlike the character in the comic strip whose code probably is still compiling, certain public sector institutions would have experienced a compilation error and be found out. It appears, unfortunately, that it is our job to peer around the cubicle partition and see what is happening on screen and perhaps to investigate the noises coming from the corridor. After all, our institutions don’t seem to be particularly concerned about doing so.

Where Now for the Free Software Desktop?

May 31st, 2013

It is a recurring but tiresome joke: is this the year of the Linux desktop? In fact, the year I started using GNU/Linux on the desktop was 1995 when my university department installed the operating system as a boot option alongside Windows 3.1 on the machines in the “PC laboratory”, presumably starting the migration of Unix functionality away from expensive workstations supplied by Sun, DEC, HP and SGI and towards commodity hardware based on the Intel x86 architecture and supplied by companies who may not be around today either (albeit for reasons of competing with each other on razor-thin margins until the slightest downturn made their businesses non-viable). But on my own hardware, my own year of the Linux desktop was 1999 when I wiped Windows NT 4 from the laptop made available for my use at work (and subsequently acquired for my use at home) and installed Red Hat Linux 6.0 over an ISDN link, later installing Red Hat Linux 6.1 from the media included in the official boxed product bought at a local bookstore.

Back then, some people had noticed that GNU/Linux was offering a lot better reliability than the Windows range of products, and people using X11 had traditionally been happy enough (or perhaps knew not to ask for more) with a window manager, perhaps some helper utilities to launch applications, and some pop-up menus to offer shortcuts for common tasks. It wasn’t as if the territory beyond the likes of fvwm had not already been explored in the Unix scene: the first Unix workstations I used in 1992 had a product called X.desktop which sought to offer basic desktop functionality and file management, and other workstations offered such products as CDE or elements of Sun’s Open Look portfolio. But people could see the need for something rather more than just application launchers and file managers. At the very least, desktops also needed applications to be useful and those applications needed to look like, act like, and work with the rest of the desktop to be credible. And the underlying technology needed to be freely available and usable so that anyone could get involved and so that the result could be distributed with all the other software in a GNU/Linux distribution.

It was this insight – that by giving that audience the tools and graphical experience that they needed or were accustomed to, the audience for Free Software would be broadened – that resulted first in KDE and then in GNOME; the latter being a reaction to the lack of openness of some of the software provided in the former as well as to certain aspects of the technologies involved (because not all C programmers want to be confronted with C++). I remember a conversation around the year 2000 with a systems administrator at a small company that happened to be a customer of my employer, where the topic somehow shifted to the adoption of GNU/Linux – maybe I noticed that the administrator was using KDE or maybe someone said something about how I had installed Red Hat – and the administrator noted how KDE, even at version 1.0, was at that time good enough and close enough to what people were already using for it to be rolled out to the company’s desktops. KDE 1.0 wasn’t necessarily the nicest environment in every regard – I switched to GNOME just to have a nicer terminal application and a more flexible panel – but one could see how it delivered a complete experience on a common technological platform rather than just bundling some programs and throwing them onto the screen when commanded via some apparently hastily-assembled gadget.

Of course, it is easy to become nostalgic and to forget the shortcomings of the Free Software desktop in the year 2000. Back then, despite the initial efforts in the KDE project to support HTML rendering that would ultimately produce Konqueror and lead to the WebKit family of browsers, the most credible Web browsing solution available to most people was, if one wishes to maintain nostalgia for a moment, the legendary Netscape Communicator. Indeed, despite its limitations, this application did much to keep the different desktop environments viable in certain kinds of workplaces, where as long as people could still access the Web and read their mail, and not cause problems for any administrators, people could pretty much get away with using whatever they wanted. However, the technological foundations for Netscape Communicator were crumbling by the month, and it became increasingly unsupported and unmaintained. Relying on a mostly proprietary stack of software as well as being proprietary itself, it was unmaintainable by any community.

Fortunately, the Free Software communities produced Web browsers that were, and are, not merely viable but also at the leading edge of their field in many ways. One might not choose to regard the years of Netscape Communicator’s demise as those of crisis, but we have much to be thankful for in this respect, not least that the Mozilla browser (in the form of SeaMonkey and Firefox) became a stable and usable product that did not demand too much of the hardware available to run it. And although there has never been a shortage of e-mail clients, it can be considered fortunate that projects such as KMail, Kontact and Evolution were established and have been able to provide years of solid service.

You Are Here

With such substantial investment made in the foundations of the Free Software desktop, and with its viability established many years ago (at least for certain groups of users), we might well expect to be celebrating now, fifteen years or so after people first started to see the need for such an endeavour, reaping the rewards of that investment and demonstrating a solution that is innovative and yet stable, usable and yet reliable: a safe choice that has few shortcomings and that offers more opportunities than the proprietary alternatives. It should therefore come as a shock that the position of the Free Software desktop has not been as troubled as it is now for quite some time.

As time passed by, KDE 1 was followed by KDE 2 and then KDE 3. I still use KDE 3, clinging onto the functionality while it still runs on a distribution that is still just about supported. When KDE 2 came out, I switched back from GNOME because the KDE project had a more coherent experience on offer than bundling Mozilla and Evolution with what we would now call the GNOME “shell”, and for a long time I used Konqueror as my main Web browser. Although things didn’t always work properly in KDE 3, and there are things that will never be fixed and polished, it perhaps remains the pinnacle of the project’s achievements.

On KDE 3, the panel responsible for organising and launching applications, showing running applications and the status of various things, just works; I may have spent some time moving icons around a few years ago, but it starts up and everything uses the right amount of space. The “K” (or “start”) menu is just a menu, leaving itself open to the organisational whims of the distribution, but on my near-obsolete Kubuntu version there’s not much in the way of bizarre menu entry and application duplication. Kontact lets me read mail and apply spam filters that are still effective, filter and organise my mail into folders; it shows HTML mail only when I ask it to, and it shows inline images only when I tell it to, which are both things that I have seen Thunderbird struggle with (amongst certain other things). Konqueror may not be a viable default for Web browsing any more, but it does a reasonable job for files on both local disks and remote systems via WebDAV and ssh, the former being seemingly impossible with Nautilus against the widely-deployed Free Software Web server that serves my files. Digikam lets me download, view and tag my pictures, occasionally also being used to fix the metadata; it only occasionally refuses to access my camera, mostly because the underlying mechanisms seem to take an unhealthy interest in how many files there are in the camera’s memory; Amarok plays my music from my local playlists, which is all I ask of it.

So when my distribution finally loses its support, or when I need to recommend a desktop environment to others, even though KDE 3 has served me very well, I cannot really recommend it because it has for the most part been abandoned. An attempt to continue development and maintenance does exist in the form of the Trinity Desktop Environment project, but the immensity of the task combined with the dissipation of the momentum behind KDE 3, and the effective discontinuation of the Qt 3 libraries that underpin the original software, means that its very viability must be questioned as its maintainers are stretched in every direction possible.

Why Can’t We All Get Along?

In an attempt to replicate what I would argue is the very successful environment that I enjoy, I tried to get Trinity working on a recent version of Ubuntu. The encouraging thing is that it managed to work, more or less, although there were some disturbing signs of instability: things would crash but then work when tried once more; the user administration panel wasn’t usable because it couldn’t find a shared library for the Python runtime environment that did, in fact, exist on the system. The “K” menu seemed to suffer from KDE 4 (or, rather, KDE Plasma Desktop) also being available on the same system because lots of duplicate menu entries were present. This may be an unfortunate coincidence, Trinity being overly helpful, or it may be a consequence of KDE 4 occupying the same configuration “namespace”. I sincerely hope that it is not the latter: any project that breaks compatibility and continuity in the fashion that KDE has done should not monopolise or corrupt a resource that actually contains the data of the user.

There probably isn’t any fundamental reason why Trinity could not return KDE 3 to some level of its former glory as a contemporary desktop environment, but getting there could certainly be made easier if everyone worked together a bit more. Having been obliged to do some user management tasks in another environment before returning to my exploration of Trinity, I attempted to set up a printer. Here, with the printer plugged in and me perusing the list of supported printers, there appeared to be no way of getting the system to recognise a three- or four-year-old printer that was just too recent for the list provided by Trinity. Returning to the other environment and trying there, a newer list was somehow obtained and the printer selected, although the exercise was still highly frustrating and didn’t really provide support for the exact model concerned.

But both environments presumably use the same print system, so why should one be better supplied than the other for printer definitions? Surely this is common infrastructure stuff that doesn’t specifically relate to any desktop environment or user interface. Is it easier to make such functionality for one environment than another, or just too convenient to take a short-cut and hack something up that covers the needs of one environment, or is it too much work to make a generic component to do the job and to package it correctly and to test it in more than a narrow configuration? Do the developers get worried about performance and start to consider complicated services that might propagate printer configuration details around the system in a high-performance manner before their manager or supervisor tells them to scale back their ambition?

I remember having to configure network printers in the previous century on Solaris using special script files. I am also sure that doing things at the lowest levels now would probably be just as frustrating, especially since the pain of Solaris would be replaced by the need to deal with things other than firing plain PostScript across the network, but there seems to be a wide gulf between this and the point-and-click tools between which some level of stability could exist and make sure that no matter how old and nostalgic a desktop environment may be perceived to be, at least it doesn’t need to dedicate effort towards tedious housekeeping or duplicating code that everyone needs and would otherwise need to write for themselves.

The Producers (and the Consumers)

One might be inclined to think that my complaints are largely formed through a bitter acceptance that things just don’t stay the same, although one can always turn this around and question why functioning software cannot go on functioning forever. Indeed, there is plenty of software on the planet that now goes about its business virtualised and still with the belief, if the operating assumptions of software can be considered in such a way, that it runs on the same range of hardware that it was written for, that hardware having been introduced (and even retired) decades ago. But for software that has to be run in a changing environment, handling new ways of doing things as well as repelling previously unimagined threats to its correct functioning and reliability, needing a community of people who are willing to maintain and develop the software further, one has to accept that there are practical obstacles to the sustained use of that software in the environments for which it was intended.

In particular, the matter of who is going to do all the hard work, and what incentives might exist to persuade them to do it if not for personal satisfaction or curiosity, is of crucial importance. This is where conflicts and misunderstandings emerge. If the informal contract between the users and developers were taking place with no historical context whatsoever, with each side having expectations of the other, one might be more inclined to sympathise with the complaints of developers that they do all the hard work and yet the users merely complain. Yet in practice, the interactions take place in the context of the users having invested in the software, too. Certainly, the users may not have invested as much energy as the developers, and their experience may not have been one of complete, uninterrupted enjoyment, but they will have played their own role in the success of the endeavour. Any radical change to the contract involves writing off the users’ investment as well as that of the developers, and without the users providing incentives and being able to direct the work, they become exposed to unpredictable risk.

One fair response to the supposed disempowerment of the user is that the user should indeed “pay their way”. I see no conflict between this and the sustainable development of Free Software at all. If people want things done, one way that society has thoroughly established throughout the ages is that people pay for it. This shouldn’t stop people freely sharing software (or not, if they so choose), because people should ultimately realise that for something to continue on a sustainable basis, they or some part of society has to provide for the people continuing that effort. But do desktop developers want the user to pay up and have a say in the direction of the product? There is something liberating about not taking money directly from your customers and being able to treat the exercise almost like art, telling the audience that they don’t have to watch the performance if they don’t like it.

This is where we encounter the matter of reputation: the oldest desktop environments have been established for long enough that they are widely accepted by distributions, even though the KDE project that produces KDE 4 has quite a different composition and delivers substantially different code from the KDE project that produced KDE 3. By keeping the KDE brand around, the project of today is able to trade on its long-standing reputation, reassure distributions that the necessary volunteers will be able to keep up with packaging obligations, and indeed attract such volunteers through widespread familiarity with the brand. That is the good side of having a recognised brand; the bad side is that people’s expectations are higher and that they expect the quality and continuity that the brand has always offered them.

What’s Wrong with Change?

In principle, nothing is essentially wrong with change. Change can complement the ways that things have always been done, and people can embrace new ways and come to realise that the old ways were just inferior. So what has changed on the Free Software desktop and how does it complement and improve on those trusted old ways of doing things?

It can be argued that KDE and GNOME started out with environments like CDE and Windows 95 acting as their inspiration at some level. As GNOME began to drift towards resembling the “classic” Mac OS environment in the GNOME 2 release series, having a menu bar at the top of the screen through which applications and system settings could be accessed, together with the current time and other status details, projects like XFCE gained momentum by appealing to the audience for whom the simple but configurable CDE paradigm was familiar and adequate. And now that Unity has reintroduced the Mac-style top menu bar as the place where application menus appear – a somewhat archaic interface even in the late 1980s – we can expect more users to discover other projects. In effect, the celebrated characteristics of the community around Free Software have let people go their own way in the company of developers who they felt shared the same vision or at least understood their needs best.

That people can indeed change their desktop environment and choose to run different software is a strength of Free Software and the platforms built on it, but the need for people to have to change – that those running GNOME, for example, feel that their needs are no longer being met and must therefore evaluate alternatives like XFCE – is a weakness brought about by projects that will happily enjoy the popularity delivered by the reputation of their “brand”, and who will happily enjoy having an audience delivered by previous versions of that software, but who then feel that they can change the nature of their product in ways that no longer meet that audience’s needs while pretending to be delivering the same product. If a user can no longer do something in a new release of a product, that should be acknowledged as a failing in that product. The user should not be compelled to find another product to use and be told that since a choice of software exists, he or she should be prepared to exercise that choice at the first opportunity. Such disregard for the user’s own investment in the software that has now abandoned him or her, not to mention the waste of the user’s time and energy in having to install alternative software just to be able to keep doing the same things, is just unacceptable.

“They are the 90%”

Sometimes attempts at justifying or excusing change are made by referring to the potential audience reached by a significantly modified product. Having a satisfied group of users numbering in the thousands is not always as exciting as one that numbers in the millions, and developers can be jealous of the success of others in reaching such numbers. One still sees labels like “10x” or “x10” being used and notions of ten-fold increases in audiences as being a necessary strategy phrased in a new and innovative way, mostly by people who perhaps missed such terminology and the accompanying strategic doctrine the first time round (or second, or third time) many years ago, and such order-of-magnitude increases are often dictated by the assumption that the 90% not currently in the audience for a product would find the product too complicated or too technologically focused and that the product must therefore discard features, or change the way it exposes its features, in order to appeal to that 90%.

Unfortunately, such initiatives to reach larger audiences risk alienating the group of users that are best understood in order to reach groups of users that are largely under-researched and thus barely understood at all. (The 90% is not a single monolithic block of identically-minded people, of course, so there is more than one group of users.) Now, one tempting way of avoiding the need to understand the untapped mass of potential users is to imitate those who are successfully reaching those users already or who at least aspire to do so. Thus, KDE 4 and Windows Vista had a certain similarity, presumably because various visual characteristics of Vista, such as its usage of desktop gadgets, were perceived to be useful features applicable to the wider marketplace and thus “must have” features that provide a way to appeal to people who don’t already use KDE or Windows. (Having a tiny picture frame on the desktop background might be a nice way of replicating the classic picture of one’s closest family on one’s physical desktop, but I doubt that it makes or breaks the adoption of a technology. Many people are still using their computer at a desk or at home where they still have those pictures in plain view; most people aren’t working from the beach where they desperately need them as a thumbnail carousel obscured by their application windows.)

However, it should be remembered that the products being imitated may originate from organisations who do not really understand their potential audience, either. Windows Vista was perceived to be a decisive response by Microsoft to the threat of alternative platforms but was regarded as a flop despite being forced on computer purchasers. And even if new users adopt such products, it doesn’t mean that they welcome or unconditionally approve of the supposed innovations introduced in their name, especially if Microsoft and its business partners forced those users to adopt such products when buying their current machine.

The Linux Palmtop and the Linux Desktop

With the success of Android – or more accurately, Android/Linux – claims may be made that radical departures from the traditional desktop software stacks and paradigms are clearly the necessary ingredient for success for GNU/Linux on the desktop, and it might be said that if only people had realised this earlier, the Free Software desktop would have become dominant. Moreover, it might also be argued that “desktop thinking” held back adoption of Linux on mobile devices, too, opening the door for a single vendor to define the payload that delivered Linux to the mobile-device-consuming masses. Certainly, the perceived need for a desktop on a mobile or PDA (personal digital assistant) is entrenched: go back a few years and the GNOME Palmtop Environment (GPE) was seen as the counterweight to Windows CE on something like a Compaq iPAQ. Even on the Golden Delicious GTA04 device, LXDE – a lightweight desktop environment – has been the default environment, admittedly more for verification purposes than for practical use as a telephone.

Naturally, a desktop environment is fairly impractical on a small screen with limited navigational controls. Although early desktop systems had fewer pixels than today’s smartphones, the increased screen size provided much greater navigational control and matched the desktop paradigm much better. It is interesting to note that the Xerox Star had a monochrome 1024×809 display, which is perhaps still larger than many smartphones, that (according to the Wikipedia entry) “…was meant to be able to display two 8.5×11 in pages side by side in actual size”. Even when we become able to show the equivalent amount of content on a smartphone screen at a resolution sufficient to permit its practical use, perhaps with very good eyesight or some additional assistance through magnification, it will remain a challenge to navigate that information precisely. Selecting text, for instance, will not be possible without a very precise pointing instrument.

Of course, one way of handling such challenges that is already prevalent is that of being able to zoom in and out of the content, with the focus in recent years on being able to do so through gestures, although the ability to zoom in on documents at arbitrary levels has been around for many years in the computer-aided design, illustration and desktop publishing fields amongst others, and the notion of general user interfaces permitting fluid scrolling and zooming over a surface showing content was already prominent before smartphones adopted and popularised it further. On the one hand, new and different “form factors” – kinds of device with different characteristics – offer improved methods of navigation, perhaps being more natural than the traditional mouse and keyboard attached to a desktop computer, but those methods may not lend themselves to use on a desktop computer with its proven ergonomics of sitting at a comfortable distance from a generously proportioned display and being able to enter textual input using a dedicated device with an efficiency that is difficult to match using, say, a virtual keyboard on a touchscreen.

Proclamations may occasionally be made that work at desks in offices will become obsolete in favour of the mobile workplace, but as that mobile workplace is apparently so often situated at the café table, the aircraft tray table, or, once the lap or forearm is tired of supporting a device, some other horizontal surface, the desktop paradigm with its supporting cast of input devices and sophisticated applications will still be around in some form and cannot be convincingly phased out in favour of content consumption paradigms that refuse to tackle the most demanding of the desktop functionality we enjoy today.

The Real Obstacle

Up to this point, my pontification has limited itself to considering what has made the “Linux desktop” attractive in the past, what makes it attractive or unattractive today, and whether the directions currently being taken might make it more attractive to new audiences and to existing users, or instead fail to capture the interest of new audiences whilst alienating existing users. In an ideal world, with every option given equal attention, and with every individual able to exercise a completely free choice and adopt the product or solution that meets his or her own needs best, we could focus on the above topic and not have to worry about anything else. Unfortunately, we do not live in such an ideal world.

Most hardware sold in retail outlets visited by the majority of potential and current computer users is bundled with proprietary software in the form of Microsoft Windows. Indeed, in these outlets, accounting for the bulk of retail sales of computers to private customers, it is not possible to refuse the bundled software and to choose to buy only the hardware so that an alternative software system may be used with the computer instead (or at least not without needing to pursue vendors afterwards, possibly via legal avenues). In such an anticompetitive environment, many customers associate the bundled software with computer hardware in general and remain unaware of alternatives, leaving the struggle to educate those customers to motivated individuals, to organisations like the FSF (and FSFE), and to independent retailers.

Unfortunately, individuals and organisations have to spend their own time and money (or their volunteers’ time and their donors’ money) righting the wrongs of the industry. Independent retailers offer hardware without bundled software, or offer Free Software pre-installed as a convenience but without cost, but the low margins on such “bare” or Free Software systems mean that they too are fixing the injustices of the system at their own expense. Once again, our considerations need to be broadened so as to consider not merely the merits of Free Software delivered as a desktop environment for the end-user, but also the challenge of getting that software in front of the end-user in the first place. And further still: although we might (and should) consider encouraging regulatory bodies to investigate the dubious practices of product bundling, we also need to consider how we might support those who attempt to reach out and educate end-users without waiting for the regulators to act.

Putting the Pieces Together

One way we might support those getting Free Software onto hardware and into the hands of end-users – in this case, purchasers of new computer hardware – is to help them, the independent retailers, command a better price for their products. If all other things are equal, people generally will not pay more for something that they can get cheaper elsewhere. If they can be persuaded to believe that a product is better in certain ways, then paying a little more doesn’t seem so bad, and the extra cost can often be justified. Sadly, convincing people about the merits of Free Software can be a time-consuming process, and people may not see the significance of those merits straight away, and so other merits are also required to help build up a sustainable margin that can be fed into the Free Software ecosystem.

Some organisations advocate that bundling services is an acceptable way of appealing to end-users and making a bit of money that can fund Free Software development. However, such an approach risks bringing with it all that is undesirable about the illegitimately-bundled software that customers are obliged to accept on their new computer: advertising, compromised user experiences, and the feeling that they are not in control of their purchase. Moreover, seeking revenue from service providers or selling proprietary services to existing end-users fails to seriously tackle the market access issue that impacts Free Software the most.

A better and proven way of providing additional persuasion is, of course, to make a better product, which is why it is crucial to understand whether the different communities developing the “Linux desktop” are succeeding in doing so or not. If not, we need to understand what we need to do to help people offer viable products that people will buy and use, because the alternative is to continue our under-resourced campaign of only partly successful persuasion and education. And this may put our other activities at risk, too, affording us only desperate resistance to all the nasty anticompetitive measures, both political and technical, that well-resourced and industry-dominating corporations are able to initiate with relative ease.

In short, our activities need to fit together and to support each other so that the whole endeavour may be sustainable and be able to withstand the threats levelled against it and against us. We need to deliver a software experience that people will use and continue to use, we need to recognise that those who get that software in front of end-users need our support in running a viable business doing so (far more than large vendors who only court the Free Software communities when it suits them), and we need to acknowledge the threats to our communities and to be prepared to fight those threats or to support those who do so.

Once we have shown that we can work together and act on all of these simultaneously and continuously as a community, maybe then it will become clear that the year of the Linux desktop has at last arrived for good.

What do I think about the Fairphone?

May 26th, 2013

Thomas Koch asks what we think about the Fairphone.

I think that the Fairphone people are generally aware of Free Software and are in favour of it, but I also think that such matters are somewhat beyond their existing experiences, so that’s why the illustration on their site is a phone running something that might be Windows Phone (I wouldn’t really know, myself) showing Skype on the screen.

The rather awkward-to-navigate Web site mentions…

Root access: Install your preferred operating system and take control of your data.

Android OS (4.2 Jelly Bean): Special interface developed by Kwame Corporation (Also open!)

Of course, without a full familiarity with Free Software, it’s very easy for people to say “open” and not deliver what we expect when we consider openness and freedom. Moreover, without proper hardware support, promises of “root access” don’t go very far. It does seem to be the case that the “special interface” will be released as Free Software (more information available on the DroidCon site in a slightly more convenient form than the alternatives). But I haven’t seen much evidence of truly open support for the “Mediatek 6589 chipset” although it is theoretically possible that it could exist. That the MT6589 apparently employs PowerVR technology does not inspire a great deal of hope for Free Software drivers and firmware, however.

Certainly, those of us interested in Free Software should consider helping Fairphone to deliver the same kind of transparency in the software supply chain that they intend to deliver in the physical supply chains. Having software that can in its entirety be maintained independently of the hardware vendor means that the device can be viable indefinitely, and the result would be a product that promotes the sustainability aspirations of the Fairphone endeavour.

I’d be tempted to order one myself if we could realistically expect to bring about Free (and Fair) Software on a Freephone.

The Academic Challenge: Ideas, Patents, Openness and Knowledge

April 18th, 2013

I recently had reason to respond to an article posted by the head of my former employer, the Rector of the University of Oslo, about an initiative to persuade students to come up with ideas for commercialisation to solve the urban challenges of the city of Oslo. In the article, the Rector brought up an “inspiring example” of such academic commercialisation: a company selling a security solution to the finance industry, possibly based on “an idea” originating in a student project and patented as part of the subsequent commercialisation strategy leading to the founding of that company.

My response made the following points:

- Patents stand counter to the academic principle of the dissemination of unencumbered knowledge, where people may come and learn, then make use of their new knowledge, skills and expertise. Universities are there to teach people and to undertake research without restricting how people in their own organisations and in other organisations may use knowledge and thus perform those activities themselves. Patents also act against the independent discovery and use of knowledge in a startlingly unethical fashion: people can be prevented from taking advantage of their own discoveries by completely unknown and inscrutable “rights holders”.

- Where patents get baked into attempts at commercialisation, not only does the existence of such patents have a “chilling effect” on others working in a particular field, but even with such patents starting life in the custody of the most responsible and benign custodians, financial adversity or other circumstances could lead to those patents being used aggressively to stifle competition and to intimidate others working in the same field.

- It is all very well claiming to support Open Access (particularly when snobbery persists about which journals one publishes in, and when a single paper in a “big name” journal will change people’s attitudes to the very same work whose aspects were already exposed without such recognition in other less well-known publications), but encouraging people to patent research at the same time is like giving with one hand while taking with the other.

- Research, development and “innovation” happen more efficiently when people don’t have to negotiate to be able to access and make use of knowledge. For those of us in the Free Software community who have seen how real progress can be made when resources – in our case, software – are freely usable by others through explicit and generous licensing, this is not news. But for others, this is a complete change of perspective that requires them to question their assumptions about the way society currently rewards the production of new work and to question the optimality of the system that grants such rewards.

I am grateful for the Rector’s response, but I feel somewhat disappointed with its substance. I must admit that the Rector is not the first person to favour the term “innovation”, but by now the term has surely lost all meaning and is used by every party to mean what they want it to mean, to be as broad or as narrow as they wish it to be, to be equivalent to work associated with various incentives such as patents, or to be a softer and more photogenic term than “invention” whose own usage may be contentious and even more intertwined with patents and specific kinds of legal instruments.

But looking beyond my terminological grumble, I remain unsatisfied:

- The Rector insists that openness should be the basis of the university’s activities. I agree, but what about freedoms? Just as the term “open source” is widely misunderstood and misused, being taken to mean that “you can look inside the box if you want (but don’t touch)” or “we will tell you what we do (but don’t request the details or attempt to do the same yourself)”, there is a gulf between openly accessible knowledge and freely usable knowledge. Should we not commit to upholding freedoms as well?

- The assertion is made that in some cases, commercialisation may be the way innovations are made available to society. I don’t dispute that sometimes you need to find an interested and motivated organisation to drive adoption of new technology or new solutions, but I do dispute that society should grant monopolies on entire fields of endeavour to organisations wishing to invest in such opportunities. Monopolies, whether state-granted or produced by the market, can have a very high cost to society. Is it not right to acknowledge such costs and to seek more equitable ways of delivering research to a wider audience?

- Even if the Rector’s mention of an “inspiring example” had upheld the openness he espouses and had explicitly mentioned the existence of patents, is it ethical to erect a fence around a piece of research and to appoint someone as the gatekeeper even if you do bother to mention that this has been done?

Commercialisation in academia is nothing new. The university where I took my degree had a research park even when I started my studies there, and that was quite a few years ago, and the general topic has been under scrutiny for quite some time. When I applied for a university place, the politics of the era in question were dominated by notions of competition, competitiveness, market-driven reform, league tables and rankings, with schools and hospitals being rated and ranked in a misguided and/or divisive exercise to improve and/or demolish the supposedly worst-performing instances of each kind.